May 2019 (Volume 28, Number 5)

Quantum Computing Scientists: Give Them Lemons, They’ll Make Lemonade

By Sophia Chen

At the close of 2018, U.S. quantum computing researchers got their holiday wish. Following a six-month legislative process, President Trump signed the National Quantum Initiative Act into law. The law sets forth a plan to inject $1.2 billion of investment into quantum technologies. This expected infusion of cash, spread over the next five years, will fund the development of new quantum devices, building upon the existing prototype quantum computers from companies such as Google, Intel, and IBM.

Now, the hard part: developing a quantum computer actually capable of surpassing non-quantum technology. So far, researchers have only been able to run algorithms on their early devices that classical computers can still handle, such as simulating three-atom molecules. At this year’s APS March Meeting in Boston, researchers discussed bite-size projects for the era ahead. They’ve already adopted a new acronym: NISQ, for Noisy Intermediate-Scale Quantum.

The term is still loosely defined: researchers roughly describe a NISQ computer as one that doesn’t have “full-blown error correction,” says quantum algorithm researcher Kristan Temme of IBM. All existing quantum computers fit this description. Because of hardware limitations, they can’t execute arbitrarily long sequences of logic gates, so a naively designed algorithm will leave the hardware delivering the wrong final answer.

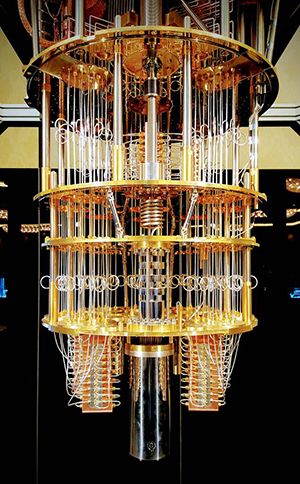

IBM

IBM’s quantum computers attempt to tackle the problem of noise and error correction.

Imagine programming the superconducting qubits in IBM’s computer into an initial quantum state, where each qubit is in some superposition of 0 and 1. To complete a computing task, you would apply microwave pulses corresponding to a sequence of logic gates that manipulate each qubit’s state. However, errors will occur during this process, such as a qubit winding up in the wrong superposition. Conventional computers correct errors by storing redundant copies of data, but quantum states cannot be copied. And while experts have begun designing special quantum error correction schemes, they have not yet successfully executed those ideas on hardware. Without error correction, the qubits end up in a final state that does not correspond to what the algorithm developer intended.

These errors arise from many different sources, says Temme. It could be that a logic gate happens to perform badly. The best logic gates perform operations correctly just shy of 100 percent of the time, so in long algorithms, statistical errors add up. In addition, sometimes a microwave pulse will alter the value of a qubit that is not supposed to be part of an operation, a scenario known as crosstalk. On top of these errors, the qubit’s information also suffers from a short lifespan. The qubit’s quantum-ness, or coherence, only lasts up to about 100 microseconds.
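
To see why small per-gate errors matter, here is a minimal Python sketch, assuming every gate fails independently with the same probability. Real errors such as crosstalk are correlated, so this is only a rough model, and the fidelity values are illustrative rather than measurements from any particular device.

```python
import math


def success_probability(gate_fidelity: float, num_gates: int) -> float:
    """Chance that every gate in a sequence succeeds, assuming independent errors."""
    return gate_fidelity ** num_gates


def max_gates(gate_fidelity: float, target: float) -> int:
    """Largest gate count whose success probability stays at or above target."""
    # Solve gate_fidelity ** n >= target for n, i.e. n <= ln(target) / ln(gate_fidelity).
    return math.floor(math.log(target) / math.log(gate_fidelity))


if __name__ == "__main__":
    for n in (10, 100, 1000):
        print(n, round(success_probability(0.99, n), 3))
    print(max_gates(0.99, 0.5), max_gates(0.999, 0.5))
```

Under this model, gates that work 99 percent of the time give only about a 37 percent chance that a 100-gate sequence runs error-free, and fewer than 70 such gates fit before the odds drop below even.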

So NISQ researchers have chosen pragmatism: to accept these flawed machines, for now. “The question is, can you still do something interesting with those devices?” says Temme.

He’s betting yes. In the last couple of years, Temme and his colleagues have published several papers on how to make the most of what they have. The general strategy in the NISQ era is to design algorithms that take the hardware’s quirks into account. For example, Temme recommends designing algorithms that use short sequences of logic gates, known as short-depth circuits. “Typically, you go up to a fraction of the coherence time, say 50 percent, and then you count how many gates you can fit in,” he says.
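
Temme’s rule of thumb amounts to a simple division. The sketch below assumes the roughly 100-microsecond coherence time mentioned earlier and a hypothetical 100-nanosecond duration for each layer of gates; actual gate times vary by device and gate type, and gates in the same layer are assumed to run in parallel.

```python
import math


def gate_budget(coherence_ns: float, gate_ns: float, fraction: float = 0.5) -> int:
    """Number of sequential gate layers that fit in a fraction of the coherence time."""
    usable_ns = fraction * coherence_ns  # e.g. 50 percent of the coherence window
    return math.floor(usable_ns / gate_ns)


# ~100 microseconds of coherence; the 100 ns per gate layer is a hypothetical value.
print(gate_budget(coherence_ns=100_000, gate_ns=100))
```

With those assumed numbers, about 500 layers of gates fit in half the coherence window; tripling the gate time cuts the budget to 166.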

Another approach is to design a hybrid algorithm that uses both classical and quantum computers, says algorithm researcher Jarrod McClean of Google. One popular algorithm with chemistry applications approximates the ground state of a molecule. The user first programs an initial guess for the ground state into the qubits. The quantum computer then refines that guess by applying a sequence of logic gates that depends on a set of adjustable parameters, analogous to the weights in a neural network. Next, the qubits are measured and the results are fed to a classical computer, which works out how to tweak those parameters on the quantum computer. The whole cycle then repeats automatically.
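
This kind of loop is usually called the variational quantum eigensolver. Below is a toy version in Python with NumPy, assuming a hypothetical one-qubit Hamiltonian H = Z + 0.5X and a single adjustable rotation angle. The “quantum” step is simulated exactly here; on real hardware the energy would be estimated from many repeated measurements. The gradient rule shown (the parameter-shift rule) is one common choice, not necessarily what any particular group uses.

```python
import numpy as np

# Hypothetical one-qubit Hamiltonian; real molecular Hamiltonians are far larger.
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
X = np.array([[0.0, 1.0], [1.0, 0.0]])
H = Z + 0.5 * X


def prepare_state(theta: float) -> np.ndarray:
    """Quantum step (simulated): apply an RY(theta) rotation to |0>."""
    return np.array([np.cos(theta / 2), np.sin(theta / 2)])


def energy(theta: float) -> float:
    """Expectation value <psi|H|psi>; hardware would estimate it by sampling."""
    psi = prepare_state(theta)
    return float(psi @ H @ psi)


def parameter_shift_gradient(theta: float) -> float:
    """Gradient from two shifted energy evaluations (parameter-shift rule for RY)."""
    return 0.5 * (energy(theta + np.pi / 2) - energy(theta - np.pi / 2))


def run_vqe(theta: float = 0.1, learning_rate: float = 0.2, steps: int = 200) -> tuple[float, float]:
    """Classical step: repeatedly nudge the gate parameter downhill in energy."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)


theta_opt, e_opt = run_vqe()
print(f"theta={theta_opt:.4f}, energy={e_opt:.6f}, exact={np.linalg.eigvalsh(H)[0]:.6f}")
```

The classical side only ever sees energy values, never the quantum state itself, which is why the same loop can in principle drive real hardware.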

McClean is trying to figure out how to optimize that initial guess for the ground state. Some researchers start with a random state and a random set of logic gates, because this choice is more forgiving on the hardware. But the strategy has a pitfall, as McClean and his colleagues reported in Nature Communications last year. For some random starting points, the optimization gets stuck on a nearly flat landscape, now known as a “barren plateau,” where the classical computer receives almost no signal about which way to adjust the parameters. The team has identified ways to avoid the logjam.
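
A toy calculation shows how an optimizer can stall. This is a simplified illustration, not the random circuits McClean’s team studied: each qubit gets one rotation, and the cost is a single measurement spanning all qubits. For randomly chosen angles, the variance of the slope the optimizer relies on halves with every added qubit, so a large device offers almost no signal about which way to move.

```python
import numpy as np


def gradient_samples(num_qubits: int, num_samples: int, rng: np.random.Generator) -> np.ndarray:
    """Slope dC/dtheta_1 of the toy cost C = prod_i cos(theta_i) at random angles."""
    thetas = rng.uniform(0.0, 2.0 * np.pi, size=(num_samples, num_qubits))
    # C is <Z x Z x ... x Z> after RY(theta_i) on each qubit, starting from |0...0>.
    # Its derivative with respect to theta_1 is -sin(theta_1) * prod_{i>1} cos(theta_i).
    return -np.sin(thetas[:, 0]) * np.prod(np.cos(thetas[:, 1:]), axis=1)


rng = np.random.default_rng(seed=7)
for n in range(1, 9):
    variance = np.var(gradient_samples(n, 50_000, rng))
    print(f"{n} qubits: gradient variance {variance:.5f} (analytic {0.5 ** n:.5f})")
```

The analytic value 0.5 to the power n follows because each random sine or cosine factor contributes an average square of one half.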

Researchers are also looking at whether NISQ computers can help with machine learning on classical data. Temme’s group has uncovered some tantalizing hints, published in Nature in March, that a NISQ machine could be better at classifying certain types of data. They engineered a classical data set to contain patterns that two qubits of a quantum computer were able to classify into two groups easily. While the classification task was simple enough for a classical computer to handle, Temme says the demonstration is a step toward the potential advantage of NISQ.
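
One way two qubits can classify data is to encode each data point as a quantum state and compare states by their overlap, a so-called quantum kernel. The sketch below simulates this in NumPy. The phase function (using the product (π − x1)(π − x2) for the entangling term) and the simple average-similarity classifier are assumptions for illustration, not a reproduction of the published experiment.

```python
import numpy as np

# Hadamard on each of two qubits.
H1 = np.array([[1.0, 1.0], [1.0, -1.0]]) / np.sqrt(2)
HH = np.kron(H1, H1)
# Z eigenvalues (+1 or -1) of qubit 1 and qubit 2 for basis states |00>, |01>, |10>, |11>.
Z1 = np.array([1.0, 1.0, -1.0, -1.0])
Z2 = np.array([1.0, -1.0, 1.0, -1.0])


def feature_state(x: np.ndarray) -> np.ndarray:
    """Map a 2-D data point to the 2-qubit state U_phi H U_phi H |00>."""
    # Assumed phase function: x1*Z1 + x2*Z2 + (pi - x1)(pi - x2)*Z1*Z2.
    phases = x[0] * Z1 + x[1] * Z2 + (np.pi - x[0]) * (np.pi - x[1]) * Z1 * Z2
    u_phi = np.exp(1j * phases)  # diagonal entries of the entangling phase gate
    state = np.zeros(4, dtype=complex)
    state[0] = 1.0
    for _ in range(2):
        state = u_phi * (HH @ state)
    return state


def kernel(x: np.ndarray, y: np.ndarray) -> float:
    """Similarity |<phi(x)|phi(y)>|^2; hardware would estimate this by sampling."""
    return float(abs(np.vdot(feature_state(x), feature_state(y))) ** 2)


def predict(x: np.ndarray, class_a: list, class_b: list) -> int:
    """Return +1 if x is, on average, more similar to class_a than to class_b."""
    score = np.mean([kernel(x, a) for a in class_a]) - np.mean([kernel(x, b) for b in class_b])
    return 1 if score > 0 else -1
```

The classical computer only needs the table of kernel values; the quantum device’s job is to estimate similarities that may be hard to compute classically for well-chosen feature maps.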

On the hardware side, researchers still can’t tell which type of qubit—superconducting loop, ion, neutral atom, quantum dot—works best. For one, they still haven’t settled on clear metrics to compare different devices. Many popular articles quote qubit number to represent how powerful the computer is, but this shorthand can be misleading because it doesn’t consider the quality of each qubit, says Temme. A finely tuned 10-qubit computer could be more powerful than an error-ridden 50-qubit one, for example. IBM has developed a metric called “quantum volume,” which takes a variety of factors such as qubit number and gate fidelity into account, but other researchers have not adopted it widely.
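
To make the 10-versus-50-qubit comparison concrete, here is a hypothetical score (explicitly not IBM’s quantum volume definition) that asks for the largest square circuit, n qubits by n layers with one gate per qubit per layer, that still succeeds at least half the time when each gate fails independently. The error rates are made up for illustration.

```python
def toy_capability(num_qubits: int, gate_error: float, target: float = 0.5) -> int:
    """Largest n <= num_qubits such that an n-qubit, n-layer circuit succeeds with prob >= target.

    Hypothetical score for illustration only; it is NOT IBM's quantum volume.
    Assumes n * n gates, each failing independently with probability gate_error.
    """
    best = 0
    for n in range(1, num_qubits + 1):
        if (1.0 - gate_error) ** (n * n) >= target:
            best = n
    return best


print(toy_capability(10, 0.001), toy_capability(50, 0.02))
```

Under these assumptions the 10-qubit machine with 0.1 percent gate error scores 10, while the 50-qubit machine with 2 percent gate error scores only 5.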

But with the promise of new funding, you can expect a lively field in the next few years. McClean thinks that the era of noisy quantum computers will last at least five years; exactly how long depends on when researchers can successfully implement error correction. Meanwhile, they’ll make do with what they have.

The author is a freelance science writer based in Tucson, Arizona.

©1995 - 2024, AMERICAN PHYSICAL SOCIETY

APS encourages the redistribution of the materials included in this newspaper provided that attribution to the source is noted and the materials are not truncated or changed.

Editor: David Voss

Staff Science Writer: Leah Poffenberger

Contributing Correspondent: Alaina G. Levine

Publication Designer and Production: Nancy Bennett-Karasik
