By Sophia Chen

Most computers perform tasks by propagating electronic signals on silicon chips. But researchers are currently pursuing different computing frameworks, some inspired by biological systems, that could offer advantages in robotics or biomedical applications. Many of these new frameworks make use of programmable matter—materials designed to change their properties in response to varied inputs. This year’s virtual APS March Meeting included several talks on developments in programmable matter.

The term “programmable matter” was coined in 1991 by physicist Norman Margolus and computer scientist Tommaso Toffoli. Margolus and Toffoli used the phrase to describe CAM-8, a cellular automata machine they’d designed that can be thought of as an array of pixels. Algorithms for this machine made use of the pixels’ spatial arrangement, and each cell or pixel had specific rules for interacting with the others. Research suggested this type of machine could efficiently simulate fluid flow and chemical reactions.
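To make the idea of cells with local interaction rules concrete, here is a minimal sketch of a two-dimensional cellular automaton. It uses Conway's Game of Life as a stand-in rule set, which is an assumption for illustration and not the rules CAM-8 actually ran. The function name `step` and the wrap-around grid edges are likewise hypothetical choices.

```python
def step(grid):
    """Advance a 2D grid of 0/1 cells by one generation.

    Illustrative rule set (Conway's Game of Life), not CAM-8's own rules:
    a live cell survives with 2 or 3 live neighbors, and a dead cell
    becomes live with exactly 3. Edges wrap around (toroidal grid).
    """
    rows, cols = len(grid), len(grid[0])
    new_grid = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            # Count the eight neighbors; each cell only "sees" its surroundings.
            neighbors = sum(
                grid[(r + dr) % rows][(c + dc) % cols]
                for dr in (-1, 0, 1)
                for dc in (-1, 0, 1)
                if (dr, dc) != (0, 0)
            )
            alive = grid[r][c] == 1
            new_grid[r][c] = 1 if neighbors == 3 or (alive and neighbors == 2) else 0
    return new_grid


# A horizontal bar of three live cells in the middle of a 5x5 grid.
blinker = [
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
]
next_generation = step(blinker)
```

Every cell applies the same rule using only its immediate neighbors, so the computation is spread across the whole array rather than routed through a central processor.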

Over the last three decades, the term has evolved to describe materials that can change their physical properties, such as shape, elasticity, or electrical properties, in response to prearranged triggers. One 2015 demonstration from the Massachusetts Institute of Technology involved a self-assembling origami robot that could also self-destruct: a sheet that folded itself into a tiny shape, moved around, carried loads, and then dissolved in acetone.

Sam Dillavou

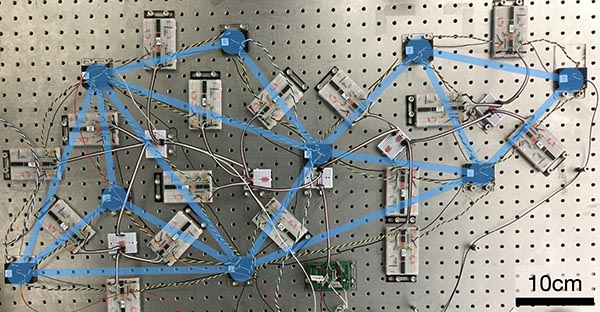

Researchers at the University of Pennsylvania have developed an electrical circuit that learned to identify different kinds of iris flowers.

At the 2021 March Meeting, postdoctoral researcher Sam Dillavou of the University of Pennsylvania described an electrical circuit that “learned” to adjust its own resistance to produce a desired voltage output without top-down instruction.

The system, which relied on a theoretical framework by postdoctoral researcher Menachem Stern of the University of Pennsylvania, used two identical circuits with variable resistors that were set to the same initial values. Dillavou applied the same voltage to each circuit but biased one of them to produce a voltage output closer to the desired value. The power dissipation of the two circuits was then compared, and the resistors in both circuits were adjusted accordingly until the circuits produced the desired voltage.
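The two-circuit scheme can be sketched numerically on the smallest possible example, a voltage divider with two adjustable resistors. This is a toy simulation under stated assumptions, not the experimental procedure: the update rule (change each conductance in proportion to the difference in squared voltage drop, that is, dissipated power per unit conductance, between the unbiased and biased copies), the function name `train_divider`, and every parameter value are illustrative choices.

```python
def train_divider(v_in=1.0, v_target=0.7, k1=1.0, k2=1.0,
                  eta=0.1, alpha=0.5, steps=500, k_min=1e-3):
    """Train a two-resistor voltage divider to output v_target.

    k1 is the conductance between the input and the output node, and k2
    is the conductance between the output node and ground. All values
    and the update rule are assumptions made for illustration.
    """
    for _ in range(steps):
        # "Free" copy: the output settles wherever the resistors put it.
        v_free = v_in * k1 / (k1 + k2)
        # "Biased" copy: the output is nudged a fraction eta toward the target.
        v_biased = v_free + eta * (v_target - v_free)

        # Voltage drops across each resistor in the two copies.
        drop1_free, drop2_free = v_in - v_free, v_free
        drop1_biased, drop2_biased = v_in - v_biased, v_biased

        # Local rule: each resistor compares its own squared voltage drop
        # in the two copies and shifts its conductance accordingly.
        k1 += (alpha / eta) * 0.5 * (drop1_free ** 2 - drop1_biased ** 2)
        k2 += (alpha / eta) * 0.5 * (drop2_free ** 2 - drop2_biased ** 2)

        # Keep conductances physical (strictly positive).
        k1, k2 = max(k1, k_min), max(k2, k_min)

    return k1, k2, v_in * k1 / (k1 + k2)


k1, k2, v_out = train_divider()
print(f"k1={k1:.3f}, k2={k2:.3f}, output={v_out:.4f}")
```

Each resistor updates using only the voltage drops across itself, which is what lets this kind of learning proceed without a central controller computing the changes.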

Dillavou demonstrated that this circuit could perform a simple machine learning task using a historical dataset of measurements of 150 iris flowers. Given four measurements of an iris’s dimensions, the circuit learned to produce a voltage that indicated which of three types of iris the measurements came from. The circuit first learned the correct classification for 30 iris examples. It could then classify the other 120 flowers with 95 percent accuracy. In effect, the circuit performed “supervised learning,” a common method in artificial intelligence today in which, for example, a computer learns to identify a cat after being shown many labeled photos of cats.

In addition, the circuit still worked after it was damaged. Dillavou cut wires in the circuit, and after additional training, it was still able to generate the specified voltage. While the system is far less complex than a brain, Dillavou says it is “more reminiscent of a biological system than, say, someone sawing an iPhone in half.”

Future versions of this circuit could be useful for a remotely controlled planetary rover, Dillavou and Stern say. “You can just put it wherever it needs to go and then train it in situ to respond to the signals,” says Stern. In addition, if part of the system were smashed while deployed on another planet, the system could still work.

At the meeting, Michelle Berry, a fourth-year PhD student at Syracuse University, presented theoretical research on a mechanical computer. Such a device would execute logic like a conventional computer but with physical forces instead of electrical signals. For example, the device could be designed to sense a force in its surroundings, which could initiate subsequent motion to allow the device to execute some task.

Berry studies networks of rigid rods connected by joints—similar to K’nex, the children’s toy—and how forces travel through the network. In particular, she is developing a mechanical analog of a transistor. This is an area of the network that lets force propagate only under a specified condition, like a switch. In theory, you can connect these mechanical transistors to form mechanical logic gates, although Berry says these are still experimentally difficult to build.
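The step from switches to logic gates can be illustrated with a purely Boolean abstraction. The sketch below ignores all of the mechanics (rods, joints, force magnitudes) and treats a mechanical transistor as something that passes force only when its control condition holds. The function names and the series and parallel wiring are assumptions for illustration, not Berry's designs.

```python
def transistor(force_in: bool, control: bool) -> bool:
    """Idealized switch: force propagates only if the control condition is met."""
    return force_in and control


def and_gate(a: bool, b: bool, source: bool = True) -> bool:
    """Two switches in series: force gets through only if both are enabled."""
    return transistor(transistor(source, a), b)


def or_gate(a: bool, b: bool, source: bool = True) -> bool:
    """Two switches in parallel: force gets through if either is enabled."""
    return transistor(source, a) or transistor(source, b)


# Print the truth tables for both gates.
for a in (False, True):
    for b in (False, True):
        print(a, b, and_gate(a, b), or_gate(a, b))
```

In this picture the logic comes entirely from how the switches are connected, which is the same principle that lets electronic transistors be composed into gates.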

A mechanical computer based on these jointed rods would also be far less complex than a regular computer, but it could offer advantages for specific applications. Berry points to potential biomedical applications inside a patient’s body, where “you don’t want electronics, and you don’t need crazy processing power.”

Nidhi Pashine designs and studies metamaterials that mimic a protein mechanism known as allosteric regulation. In allosteric regulation, a molecule binds to a site on a protein, often changing the protein’s shape and allowing another site on the protein to become active. “This site [becomes] capable of undergoing a chemical reaction that it couldn’t do before,” says Pashine, who recently received her PhD from the University of Chicago.

Pashine’s metamaterials, made of rubber, form a net composed of polygons resembling a spider web. This arrangement of polygons is random: its disorder is inspired by the arrangement of atoms in glass.

Pashine engineers each metamaterial to respond to force in a specific way. If you pull at one site on the metamaterial, the force propagates through the material to induce a desired motion on the other end. Pashine compares it to opening someone’s front door and having a window shut on the other side of the house.

Typically, researchers design their metamaterials using simulations, but simulations fail to capture the complexity of real-world materials. Pashine came up with an experiment-based algorithm that avoids these limitations. In her method, she measures the stresses in the metamaterial and uses those measurements to decide which links in the network to remove, until the network responds in the desired way.

Pashine says that “pruning” a random network to create a metamaterial also offers design advantages compared to designing the network from the ground up. “It's much easier if you start with something random and then modify it,” she says.

Video recordings of these and other programmable matter presentations will be available on the meeting website (march.aps.org) until June 19, 2021.

The author is a freelance science writer based in Columbus, Ohio.

©1995 - 2024, AMERICAN PHYSICAL SOCIETY

APS encourages the redistribution of the materials included in this newspaper provided that attribution to the source is noted and the materials are not truncated or changed.

Editor: David Voss

Staff Science Writer: Leah Poffenberger

Contributing Correspondents: Sophia Chen, Alaina G. Levine

May 2021 (Volume 30, Number 5)
